TimeMixer

Description

This API calls the TimeMixer model, which can be used for long-term and short-term time series forecasting, imputation, anomaly detection, and classification.

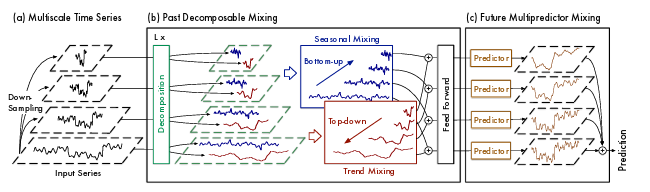

TimeMixer Model Architecture

TimeMixer is an MLP-based model that combines multiscale downsampling with time series decomposition. It splits the series at each scale into seasonal and trend components, mixes each component across scales in the way that suits its characteristics, and ensembles the per-scale predictions, so that the series is analyzed and forecast from multiple perspectives.

Key Features of TimeMixer:

- Multiscale Downsampling
  - Generates multiscale time series by average downsampling. Finer scales preserve seasonal detail; coarser scales reveal trend patterns.

- Past-Decomposable-Mixing (PDM)
  - Decomposes each scale into seasonal and trend components and mixes them in opposite directions: seasonal information flows from fine to coarse scales, and trend information flows from coarse to fine scales.

- Future-Multipredictor-Mixing (FMM)
  - Ensembles the predictions made from each scale, so that fine-scale (seasonal) and coarse-scale (trend) information complement each other for more accurate forecasting.
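
The multiscale downsampling step can be sketched in plain Python. This is a minimal illustration, assuming non-overlapping average pooling in which the stride equals the window, and using the window size and layer count from the parameter examples below (2 and 2). `avg_downsample` and `build_scales` are hypothetical helpers, not part of the TimeMixer API.

```python
def avg_downsample(series, window):
    """Average each non-overlapping block of `window` points (a ragged tail is dropped)."""
    n_blocks = len(series) // window
    return [sum(series[i * window:(i + 1) * window]) / window for i in range(n_blocks)]


def build_scales(series, window=2, layers=2):
    """Return scales ordered finest to coarsest; `layers` down-samplings give layers + 1 scales."""
    scales = [list(series)]
    for _ in range(layers):
        scales.append(avg_downsample(scales[-1], window))
    return scales


x = [float(t % 24) for t in range(96)]  # toy hourly series with a daily cycle
for level, s in enumerate(build_scales(x)):
    print(level, len(s))  # lengths 96, 48, 24
```

Each coarser scale halves the length, which is why fine scales keep short-period (seasonal) detail while coarse scales smooth it into the trend.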

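For more details on the model architecture, refer to the TimeMixer paper (Wang et al., "TimeMixer: Decomposable Multiscale Mixing for Time Series Forecasting," ICLR 2024).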

API module path

from api.v2.model.TimeMixer import TimeMixer

Parameters

task_name

- Specifies the task to perform. Choose from long_term_forecast, short_term_forecast, anomaly_detection, imputation, or classification.

- Example

- task_name = 'long_term_forecast'

seq_len

- Specifies the input sequence length (number of past time steps).

- Example

- seq_len = 96

label_len

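- Specifies the label length: the number of time steps at the end of the input window that are repeated at the start of the target window (a convention inherited from decoder-style forecasting data loaders).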

- Example

- label_len = 48

pred_len

- Specifies the prediction length (number of future time steps to forecast).

- Example

- pred_len = 96
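
The three length parameters above determine how a training sample is cut from a longer series. The sketch below assumes the windowing convention of the Time-Series-Library data loaders, where the target window starts label_len steps before the end of the input; `make_window` is a hypothetical helper, and this API's own data pipeline may differ.

```python
def make_window(series, start, seq_len, label_len, pred_len):
    """Cut one (input, target) pair under the assumed convention (hypothetical helper)."""
    s_end = start + seq_len        # input window is series[start:s_end]
    r_begin = s_end - label_len    # target starts label_len steps before the input ends
    r_end = s_end + pred_len       # and runs pred_len steps past it
    if start < 0 or r_end > len(series):
        raise ValueError("series too short for this window")
    return series[start:s_end], series[r_begin:r_end]


series = list(range(300))
x, y = make_window(series, start=0, seq_len=96, label_len=48, pred_len=96)
print(len(x), len(y))       # 96 144
print(x[-1], y[0], y[-1])   # 95 48 191
```

With the example values, the target has label_len + pred_len = 144 steps, and its first 48 steps overlap the last 48 input steps.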

enc_in

- Specifies the number of input features/variables.

- Example

- enc_in = 7

c_out

- Specifies the number of output features/variables.

- Example

- c_out = 7
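
enc_in and c_out fix the variable dimension of the model's input and output. The check below is a hypothetical helper that assumes the common (batch, time, variables) tensor layout; the layout this API actually expects is not stated in this document.

```python
def check_io_shapes(x_shape, y_shape, seq_len, pred_len, enc_in, c_out):
    """Raise ValueError unless x is (B, seq_len, enc_in) and y is (B, pred_len, c_out)."""
    if len(x_shape) != 3 or len(y_shape) != 3:
        raise ValueError("expected 3-D shapes (batch, time, variables)")
    if x_shape[0] != y_shape[0]:
        raise ValueError("batch size changed between input and output")
    if tuple(x_shape[1:]) != (seq_len, enc_in):
        raise ValueError(f"input should be (batch, {seq_len}, {enc_in}), got {tuple(x_shape)}")
    if tuple(y_shape[1:]) != (pred_len, c_out):
        raise ValueError(f"output should be (batch, {pred_len}, {c_out}), got {tuple(y_shape)}")
    return True


print(check_io_shapes((32, 96, 7), (32, 96, 7), seq_len=96, pred_len=96, enc_in=7, c_out=7))  # True
```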

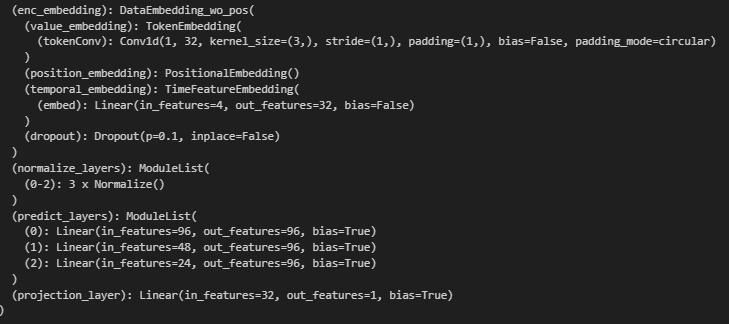

d_model

- Specifies the model dimension (embedding size).

- Example

- d_model = 32

d_ff

- Specifies the feed-forward network dimension.

- Example

- d_ff = 32
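
d_model and d_ff together size a feed-forward block. This is a minimal sketch, assuming the common Linear(d_model to d_ff), GELU, Linear(d_ff to d_model) pattern applied to one embedded vector; the random weights stand in for trained ones, and whether this API's TimeMixer uses exactly this layout is an assumption.

```python
import math
import random


def gelu(v):
    """GELU activation (tanh approximation)."""
    return 0.5 * v * (1.0 + math.tanh(math.sqrt(2.0 / math.pi) * (v + 0.044715 * v ** 3)))


def init_linear(n_in, n_out, rng):
    """Random (n_out x n_in) weight matrix and zero bias, standing in for a trained layer."""
    bound = 1.0 / math.sqrt(n_in)
    weight = [[rng.uniform(-bound, bound) for _ in range(n_in)] for _ in range(n_out)]
    return weight, [0.0] * n_out


def linear(x, layer):
    """Compute W @ x + b for a single vector x."""
    weight, bias = layer
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weight, bias)]


def feed_forward(x, params):
    """d_model -> d_ff (GELU) -> d_model."""
    first, second = params
    hidden = [gelu(h) for h in linear(x, first)]  # map to d_ff dimensions
    return linear(hidden, second)                 # project back to d_model


rng = random.Random(0)
d_model, d_ff = 32, 32
params = (init_linear(d_model, d_ff, rng), init_linear(d_ff, d_model, rng))
out = feed_forward([0.1] * d_model, params)
print(len(out))  # 32
```

The output width always equals d_model, so d_ff only changes the capacity of the hidden layer, not the shape seen by the rest of the model.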

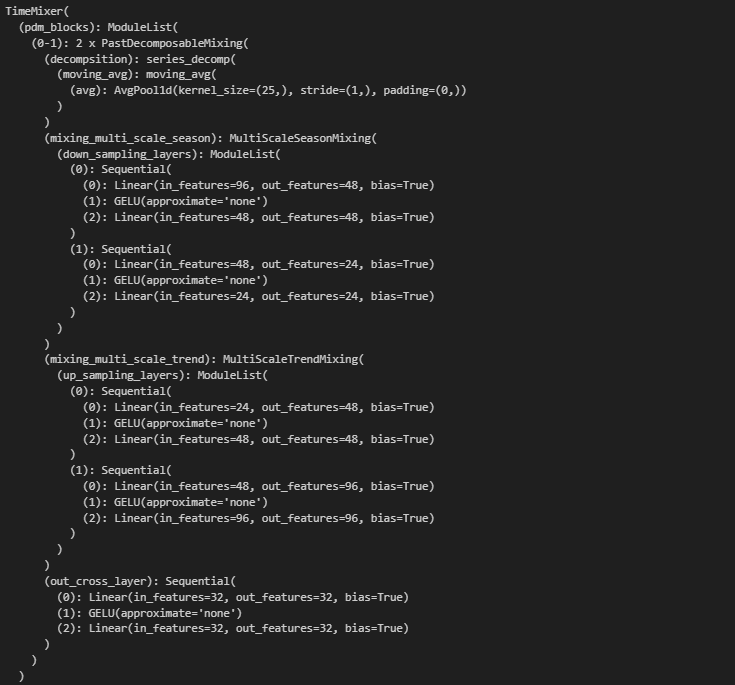

e_layers

- Specifies the number of encoder layers (PDM block iterations).

- Example

- e_layers = 2
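
Each of the e_layers iterations applies one PDM block to all scales. The following is a heavily simplified sketch of the mixing directions described under Past-Decomposable-Mixing: it works on raw 1-D series instead of embedded channels, replaces the learned mixing layers with random linear maps, and omits the feed-forward layer the paper applies before the residual addition. All helper names are hypothetical.

```python
import math
import random


def downsample(x, window):
    """Non-overlapping average pooling."""
    return [sum(x[i:i + window]) / window for i in range(0, len(x) - window + 1, window)]


def decompose(x, kernel):
    """Moving-average split into (seasonal, trend); kernel should be odd."""
    pad = (kernel - 1) // 2
    padded = [x[0]] * pad + list(x) + [x[-1]] * pad
    trend = [sum(padded[i:i + kernel]) / kernel for i in range(len(x))]
    return [v - t for v, t in zip(x, trend)], trend


def random_map(n_in, n_out, rng, scale=0.1):
    """A random (n_out x n_in) matrix standing in for a learned linear layer."""
    return [[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)]


def apply_map(weight, x):
    return [sum(w * v for w, v in zip(row, x)) for row in weight]


def pdm_block(scales, up_maps, down_maps, kernel):
    """One simplified PDM block over scales ordered finest to coarsest."""
    parts = [decompose(s, kernel) for s in scales]
    seasons = [p[0] for p in parts]
    trends = [p[1] for p in parts]
    # Seasonal mixing, bottom-up (fine to coarse): scale i absorbs a projection of scale i - 1.
    for i in range(1, len(seasons)):
        mixed = apply_map(up_maps[i - 1], seasons[i - 1])
        seasons[i] = [a + b for a, b in zip(seasons[i], mixed)]
    # Trend mixing, top-down (coarse to fine): scale i absorbs a projection of scale i + 1.
    for i in range(len(trends) - 2, -1, -1):
        mixed = apply_map(down_maps[i], trends[i + 1])
        trends[i] = [a + b for a, b in zip(trends[i], mixed)]
    # Residual update: each scale adds back its mixed seasonal and trend parts.
    return [[x + s + t for x, s, t in zip(xs, ss, ts)]
            for xs, ss, ts in zip(scales, seasons, trends)]


def make_block_maps(lengths, rng):
    up = [random_map(lengths[i], lengths[i + 1], rng) for i in range(len(lengths) - 1)]
    down = [random_map(lengths[i + 1], lengths[i], rng) for i in range(len(lengths) - 1)]
    return up, down


rng = random.Random(0)
base = [math.sin(2 * math.pi * t / 24) + 0.01 * t for t in range(96)]
scales = [base, downsample(base, 2), downsample(downsample(base, 2), 2)]
lengths = [len(s) for s in scales]  # [96, 48, 24]
e_layers, kernel = 2, 25
for _ in range(e_layers):  # each layer gets its own (here random) mixing weights
    up, down = make_block_maps(lengths, rng)
    scales = pdm_block(scales, up, down, kernel)
print([len(s) for s in scales])  # [96, 48, 24]
```

Stacking blocks never changes the per-scale lengths, so e_layers only controls how many rounds of cross-scale mixing take place.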

dropout

- Specifies the dropout rate, a value between 0.0 and 1.0.

- Example

- dropout = 0.1

down_sampling_window

- Specifies the down-sampling window size, i.e., the factor by which each down-sampling layer shortens the series when building the multiscale inputs.

- Example

- down_sampling_window = 2

down_sampling_layers

- Specifies the number of down-sampling layers. The total number of scales is down_sampling_layers + 1.

- Example

- down_sampling_layers = 2
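
down_sampling_window and down_sampling_layers together fix the length of every scale. A minimal sketch, assuming the stride equals the window and lengths are floored at each step; `scale_lengths` is a hypothetical helper.

```python
def scale_lengths(seq_len, window, layers):
    """Length of each scale, finest first; layers down-samplings give layers + 1 scales."""
    return [seq_len // window ** i for i in range(layers + 1)]


print(scale_lengths(96, 2, 2))  # [96, 48, 24]
print(scale_lengths(96, 2, 3))  # [96, 48, 24, 12]
```

Under this assumption, choosing seq_len divisible by down_sampling_window ** down_sampling_layers avoids dropping trailing points at the coarser scales.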

down_sampling_method

- Specifies the down-sampling method. Choose from avg, max, or conv.

- Example

- down_sampling_method = 'avg'
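
The difference between the avg and max methods is easiest to see on a short series. This sketch assumes non-overlapping windows; the conv method uses learned weights and is intentionally not reproduced. `pool` is a hypothetical helper.

```python
def pool(series, window, method="avg"):
    """Non-overlapping pooling; 'conv' is learned and not sketched here."""
    blocks = [series[i:i + window] for i in range(0, len(series) - window + 1, window)]
    if method == "avg":
        return [sum(b) / window for b in blocks]
    if method == "max":
        return [max(b) for b in blocks]
    raise ValueError(f"unsupported method in this sketch: {method!r}")


x = [1.0, 3.0, 2.0, 8.0, 5.0, 5.0]
print(pool(x, 2, "avg"))  # [2.0, 5.0, 5.0]
print(pool(x, 2, "max"))  # [3.0, 8.0, 5.0]
```

Averaging smooths spikes such as the 8.0, while max pooling preserves them, which can matter when short peaks carry signal.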

decomp_method

- Specifies the decomposition method. Choose from moving_avg or dft_decomp.

- Example

- decomp_method = 'moving_avg'

moving_avg

- Specifies the kernel size of the moving average. Used when decomp_method is moving_avg. An odd value is recommended so that the centered window keeps the trend the same length as the input.

- Example

- moving_avg = 25
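
The moving_avg decomposition can be sketched as a centered moving average with edge replication. This assumes the padding scheme common to Autoformer-style decomposition blocks; `moving_avg_decomp` is a hypothetical helper, and the trend is the smoothed series while the seasonal part is the remainder.

```python
def moving_avg_decomp(x, kernel_size=25):
    """Split x into (seasonal, trend) with a centered moving average.

    Edge values are replicated (kernel_size - 1) // 2 times on each side, so an
    odd kernel_size keeps the trend the same length as x.
    """
    if kernel_size % 2 == 0:
        raise ValueError("this sketch requires an odd kernel_size")
    pad = (kernel_size - 1) // 2
    padded = [x[0]] * pad + list(x) + [x[-1]] * pad
    trend = [sum(padded[i:i + kernel_size]) / kernel_size for i in range(len(x))]
    seasonal = [v - t for v, t in zip(x, trend)]
    return seasonal, trend


x = [float(i) for i in range(10)]
seasonal, trend = moving_avg_decomp(x, kernel_size=3)
print([round(t, 3) for t in trend])  # interior trend equals x; only the padded edges differ
```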

top_k

- Specifies how many dominant frequencies (the top k by amplitude) are kept as the seasonal component in DFT decomposition. Used when decomp_method is dft_decomp.

- Example

- top_k = 5
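
The dft_decomp option can be sketched with a plain discrete Fourier transform. This is a minimal illustration, assuming the seasonal part is rebuilt from the top_k strongest non-DC frequencies and the trend is the remainder; ties are broken arbitrarily, and `dft_decomp` is a hypothetical helper rather than the API's implementation.

```python
import cmath
import math


def dft_decomp(x, top_k=5):
    """Seasonal = inverse DFT of the top_k strongest non-DC frequencies; trend = x - seasonal."""
    n = len(x)
    half = n // 2
    # One-sided spectrum X[k] for k = 0..n // 2 (the bins an rfft returns).
    spec = [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(half + 1)]
    # Rank frequencies 1..n // 2 by amplitude; the DC term (k = 0) is never kept.
    keep = sorted(range(1, half + 1), key=lambda k: abs(spec[k]), reverse=True)[:top_k]
    seasonal = []
    for t in range(n):
        total = 0.0
        for k in keep:
            # A bin other than Nyquist also stands for its conjugate pair, so it counts twice.
            weight = 1.0 if (n % 2 == 0 and k == half) else 2.0
            total += weight * (spec[k] * cmath.exp(2j * math.pi * k * t / n)).real
        seasonal.append(total / n)
    trend = [v - s for v, s in zip(x, seasonal)]
    return seasonal, trend


n = 32
x = [3.0 + 2.0 * math.sin(2 * math.pi * 4 * t / n) for t in range(n)]
seasonal, trend = dft_decomp(x, top_k=1)
print(max(abs(v - 3.0) for v in trend))  # close to 0: the constant level stays in the trend
```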

embed

- Specifies the embedding type. Choose from timeF, fixed, or learned.

- Example

- embed = 'timeF'

freq

- Specifies the time frequency. Choose from s (sec), t (min), h (hour), d (day), w (week), or m (month).

- Example

- freq = 'h'
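
With embed = 'timeF', each timestamp is typically turned into a few calendar features whose set depends on freq. The sketch below assumes the Time-Series-Library convention for freq = 'h' (hour of day, day of week, day of month, day of year, each scaled to [-0.5, 0.5]); `hourly_time_features` is a hypothetical helper.

```python
from datetime import datetime


def hourly_time_features(ts):
    """Return four calendar features scaled to [-0.5, 0.5] for freq='h'."""
    return [
        ts.hour / 23.0 - 0.5,                        # hour of day
        ts.weekday() / 6.0 - 0.5,                    # day of week (Monday = 0)
        (ts.day - 1) / 30.0 - 0.5,                   # day of month
        (ts.timetuple().tm_yday - 1) / 365.0 - 0.5,  # day of year
    ]


print(hourly_time_features(datetime(2016, 7, 1, 0)))
```

A finer freq such as 't' would typically add minute-level features, while coarser ones drop the hour feature; the exact sets are an assumption here.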

use_norm

- Specifies whether to normalize each input series before it enters the model (and denormalize the output): 1 to enable, 0 to disable.

- Example

- use_norm = 1
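
The normalization enabled by use_norm can be sketched as per-series standardization over the input window, with predictions mapped back using the same statistics. This assumes a RevIN-style scheme without the learnable affine parameters; `normalize` and `denormalize` are hypothetical helpers.

```python
import math
import statistics


def normalize(series, eps=1e-5):
    """Standardize one series over time; returns (normalized series, (mean, std))."""
    mean = statistics.fmean(series)
    std = math.sqrt(statistics.pvariance(series, mu=mean) + eps)  # eps avoids division by zero
    return [(v - mean) / std for v in series], (mean, std)


def denormalize(series, stats):
    """Map model outputs back to the original scale using the input window's statistics."""
    mean, std = stats
    return [v * std + mean for v in series]


window = [10.0, 12.0, 11.0, 15.0, 9.0]
z, stats = normalize(window)
forecast_z = z[-2:]  # stand-in for a model's normalized prediction
print(denormalize(forecast_z, stats))  # values back on the original scale
```

Normalizing per window removes level and scale shifts between windows, which is why the statistics must come from the same window whose forecast is being denormalized.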

use_future_temporal_feature

- Specifies whether to use future temporal features for prediction. True or False.

- Example

- use_future_temporal_feature = False
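
The example values above can be gathered into one object and checked against the choices this page documents. This is a sketch, assuming a SimpleNamespace-style config as used by many PyTorch time-series codebases; how such an object is passed to TimeMixer is not stated here and is deliberately left out, and `check_config` is a hypothetical helper.

```python
from types import SimpleNamespace

# Example values from the parameter list above, gathered in one place.
configs = SimpleNamespace(
    task_name="long_term_forecast",
    seq_len=96, label_len=48, pred_len=96,
    enc_in=7, c_out=7,
    d_model=32, d_ff=32, e_layers=2, dropout=0.1,
    down_sampling_window=2, down_sampling_layers=2, down_sampling_method="avg",
    decomp_method="moving_avg", moving_avg=25, top_k=5,
    embed="timeF", freq="h",
    use_norm=1, use_future_temporal_feature=False,
)

# Allowed values as listed on this page (hypothetical helper data, not part of the API).
CHOICES = {
    "task_name": {"long_term_forecast", "short_term_forecast", "anomaly_detection",
                  "imputation", "classification"},
    "down_sampling_method": {"avg", "max", "conv"},
    "decomp_method": {"moving_avg", "dft_decomp"},
    "embed": {"timeF", "fixed", "learned"},
    "freq": {"s", "t", "h", "d", "w", "m"},
    "use_norm": {0, 1},
}


def check_config(cfg):
    """Return a list of problems; an empty list means the documented constraints hold."""
    problems = []
    for name, allowed in CHOICES.items():
        value = getattr(cfg, name)
        if value not in allowed:
            problems.append(f"{name}={value!r} is not one of {sorted(map(str, allowed))}")
    if not 0.0 <= cfg.dropout <= 1.0:
        problems.append(f"dropout={cfg.dropout} is outside [0.0, 1.0]")
    if cfg.decomp_method == "moving_avg" and cfg.moving_avg % 2 == 0:
        problems.append(f"moving_avg={cfg.moving_avg} is even; an odd kernel is recommended")
    return problems


print(check_config(configs))  # []
```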

Example Code (Python)

Results
